Get involved with community repair data in a simple online task - fault categorisation with FaultCat.

Have a go here: FaultCat, or read on for more info.

Volunteers collect data about the devices that are brought into our repair events. The data is uploaded to restarters.net and shared as open data.

We held two data dives this year where other volunteers sorted repair records into types of faults. This helps us to report and visualise our data.

We’d like to review and improve the categorisations - and this is where you can help!

FaultCat

FaultCat is a web app that collects opinions about the types of faults in computers brought to community events such as Restart Parties and Repair Cafés.

What do I do?

FaultCat loads a single random record that describes a faulty device brought along to a repair event. Here’s what you can do:

- First, read the problem.

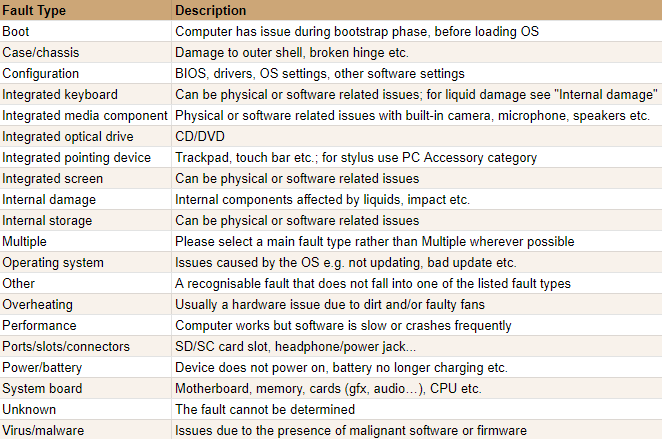

- Then read the fault type.

- If you agree with the fault type, press the ‘Yes / possibly’ button or ‘Y’ key.

- If you are not sure, press the ‘I don’t know, Fetch another repair’ button or ‘F’ key.

- If you think the suggestion is wrong, press ‘Nope, let me choose another fault’ or the ‘N’ key and pick one of the fault type buttons. Then press ‘Go with [your new fault type]’ or the ‘G’ key.

That’s it! Keep going through as many as you like - we think it’s a really fun way to discover the data. Remember - every single fault you see is an item brought to a repair event and looked at by a fixer.

Why computers?

All kinds of small electrical and electronic devices are brought to repair events. Right now we are focused on computer repair data (desktops, laptops and tablets) because the EU is set to draft rules about repairability. The data we collect at repair events and through apps like FaultCat will provide policymakers with useful information.

Read more about why we collect data and our previous work on why computers fail.

FAQ

Do I need an account?

No - you don’t need to sign up to restarters.net to play with FaultCat. We’d love it if you did create an account and told us what you think of FaultCat. You can then also get involved in events, data collection and discussions about community repair.

What if there’s not enough info to decide on a fault type?

Sometimes it can be hard to choose a fault type because there is not a lot of information recorded. The data comes from lively, sociable community repair events where volunteers are busy trying to fix things and don’t always have time to write down much detail.

Don’t worry if you can’t decide - just press “I don’t know”. It is in fact very useful for us to know where we lack information as we are looking for ways to improve our data collection.

What do you do with my answers?

They are pooled together to see if we can reach consensus on the problem we saw during a particular repair attempt. Once we have a good level of confidence in the faults, we can use this information to complement existing knowledge on why things fail and what the barriers to repair are. This will feed into campaign work for the Right to Repair.
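For the curious, here is a rough idea of how pooled opinions could be turned into a consensus. This is only an illustrative Python sketch, not FaultCat's actual code: the function name `consensus_fault`, the minimum of three opinions, the 60% agreement threshold and the example fault type names are all assumptions made up for this example.

```python
from collections import Counter
from typing import Optional


def consensus_fault(
    opinions: list[str],
    min_votes: int = 3,      # assumed: fewest opinions needed before deciding
    threshold: float = 0.6,  # assumed: share of opinions that must agree
) -> Optional[str]:
    """Return the most popular fault type, or None if there is no clear consensus."""
    if len(opinions) < min_votes:
        return None  # not enough opinions collected yet

    # Find the fault type chosen most often and how many times it was chosen.
    fault, count = Counter(opinions).most_common(1)[0]

    # Accept it only if a large enough share of people agreed.
    if count / len(opinions) >= threshold:
        return fault
    return None
```

With these assumed settings, three opinions of "Screen", "Screen" and "Power/battery" would give "Screen" as the consensus, while three different opinions would give no consensus and the record would need more answers.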

This is cool! Any other stuff I can do?

We’re working on more repair data apps - stay tuned! In the meantime, there are lots of ways to get involved with our data work.

What if I find something weird or have a suggestion?

Please share it in this discussion (you will need an account to do so).